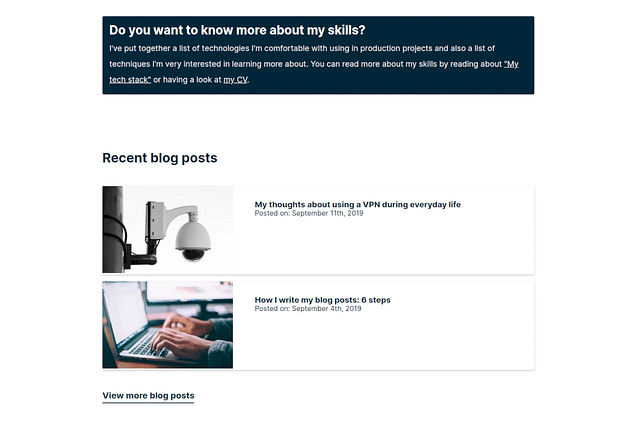

Building event sourced systems is pure joy

In the past few months, I've rediscovered event sourcing and how nice it is to build event sourced systems. I've written about this topic before, in 2019, but have since learned different techniques and approaches. The simplicity of the code you write with event sourcing is something I really enjoy. It allows me to build systems that are flexible and maintainable.

A little warning: I tried to write a nice, short article about this topic, but I got too excited and it became a bit longer than intended. The goal is still the same, though: I want to highlight the benefits of event sourcing and how you might find it useful in your own projects. Feel free to skip around, but I recommend reading the whole article to get the full picture.

The "problem" with CRUD

I've chosen this subtitle to highlight a problem with CRUD, not to claim that CRUD is wrong or bad. Just keep that in mind.

After a few years of working on a CRUD app, you'll eventually run into decisions you've made in the past. That's when you'll see just how messy all those business rules and growing complexity can get.

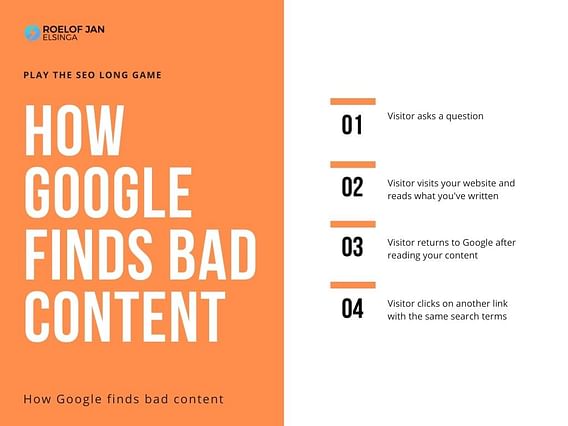

Updating fields in a database row is easy, until you find yourself in a situation where the data no longer makes sense. You have to figure out what was updated, why, and when. Traditional CRUD doesn't have a good way to keep track of these state changes, so debugging takes a lot of time and mental energy.

Of course, there are ways to fix this, but I'll get to those later on.

CRUD is too technical

Ideally, code is nothing more than a representation of how the business works. However, CRUD is too technical for non-technical people.

Picture this: You have a situation where you've got an e-commerce order and want to cancel this order. You'll hear someone say this: "Just delete this order". A junior developer will hear this and will quite literally delete this order. That was the task, so everything is great, right?

Once you get a bit more experience, you'll realize that this is not what the business wanted at all. They just didn't want to see this order in their list of processable orders any more. This order required no more action from the business, and the customer no longer wants the ordered product or service.

If you actually delete the order, you lose all context around it. You don't know who placed the order, when it was placed, or why it was cancelled. You just deleted it. This creates gaps in your data, and you won't remember why in a year.

The business has the best intentions and wants to help you by speaking your words, but they simply don't know how to think through the problem like a software developer. And why should they? This is why you, as a software developer, need to speak to the business in their words. No technical words, no jargon, just plain words.

This is why dev teams have started using techniques like DDD (Domain-Driven Design). DDD is all about understanding the business and its requirements, and then building software that matches that understanding.

Anyone understands what "Order cancelled" means in the context of the business. You don't have to explain what "Deleting an order" means, because that sentence doesn't exist in the real world. You can't undo time and prevent the order from being placed in the first place, but you can cancel it to signal to anyone that no further action is required.

I hear you think: I like your words, funny man, but how does this work in practice? Why wouldn't this work with CRUD?

How is event sourcing different from CRUD?

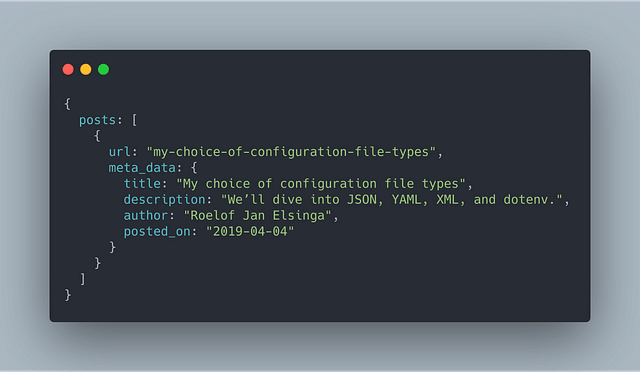

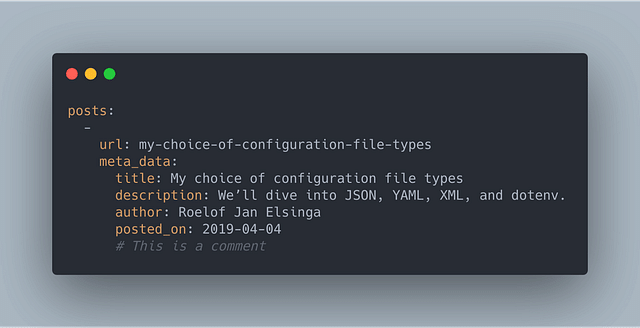

If you're familiar with event sourcing, you might already know this example, but this is the gist of it: In a "normal" application, a CRUD application, you Create, Read, Update, and Delete data from your database. This is all good for many use cases. However, now you get questions from customers, colleagues, or other stakeholders about something that happened in the past. For example: "Who cancelled this order?".

In this CRUD application, you can't answer this question unless you've thought about this feature before you started building your app.

So, you add an audit trail to your application to keep track of these things... Great! But, this only solves the problem for future orders, not past orders. The only news you can share is: "From now on, we know who cancelled the order".

Event sourcing solves this problem for you, because you don't make changes in the database directly, but rather, you keep track of "events". Events are facts, indisputable facts. An order was placed, an order was cancelled, and an order was refunded. These are concrete things that have happened. Business rules might change, but these events will always be true.

In the example above, you can refactor your process to keep track of events like this:

OrderPlaced, OrderCancelled, OrderRefunded

These events can contain metadata, such as the person who performed this action, in which system they performed it, at what time, etc.
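Here's a rough sketch of what such an event record could look like in Go. The field names (`ActorID`, `Source`, `OccurredAt`) and the `NewEvent` helper are made up for this example; they only illustrate the idea of attaching metadata to a fact.

```go
package main

import "time"

// Metadata describes who did something, where, and when.
// All field names are assumptions made for this example.
type Metadata struct {
	ActorID    string    // the person who performed the action
	Source     string    // the system in which it was performed
	OccurredAt time.Time // when it happened
}

// Event is a recorded fact about a single order.
type Event struct {
	Type     string // e.g. "OrderPlaced", "OrderCancelled", "OrderRefunded"
	OrderID  string
	Metadata Metadata
}

// NewEvent builds an event and stamps it with its metadata.
func NewEvent(eventType, orderID, actorID, source string, at time.Time) Event {
	return Event{
		Type:     eventType,
		OrderID:  orderID,
		Metadata: Metadata{ActorID: actorID, Source: source, OccurredAt: at},
	}
}
```

With a record like this, "Who cancelled this order?" becomes a matter of reading `Metadata.ActorID` on the OrderCancelled event.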

Read models

So you have this list of events, now what? How can you actually use this in your application? You can't just give this list of events to your colleagues and customers, because they don't care about the events, they care about the current state of the data. They want to know who cancelled the order, not that an OrderCancelled event was created.

So rather than a list of events, you'll want something that represents the current state of your entity. In this case, your order.

You can get to this representation by applying the recorded events to a base state. For example, you can start with an empty order object, and then apply the OrderPlaced event to it. This will give you the current state of your order.

Then you can apply the OrderCancelled event to this order object, which will update the state of your order to reflect that it has been cancelled.

This is your base state:

    {
        "order_id": "12345",
        "is_cancelled": false,
        "cancelled_by": null
    }

Now we can apply some events, such as OrderCancelled, which contains this information:

    {
        "order_id": "12345",
        "employee_id": "67890"
    }

This can result in the following state:

    {
        "order_id": "12345",
        "is_cancelled": true,
        "cancelled_by": "67890"
    }
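The step from base state to resulting state can be sketched in Go as a small fold over the events. This is a minimal illustration, not a full implementation: `OrderState`, `ApplyEvent`, and `Project` are names I picked for this example, and the fields simply mirror the JSON above.

```go
package main

// OrderState mirrors the JSON state shown above.
type OrderState struct {
	OrderID     string
	IsCancelled bool
	CancelledBy *string // nil until the order is cancelled
}

// OrderCancelled mirrors the event payload shown above.
type OrderCancelled struct {
	OrderID    string
	EmployeeID string
}

// ApplyEvent returns the state that results from applying one event.
// Unknown event types leave the state unchanged.
func ApplyEvent(state OrderState, event any) OrderState {
	switch e := event.(type) {
	case OrderCancelled:
		state.IsCancelled = true
		employee := e.EmployeeID
		state.CancelledBy = &employee
	}
	return state
}

// Project folds a list of events, in order, onto a base state.
func Project(base OrderState, events []any) OrderState {
	state := base
	for _, event := range events {
		state = ApplyEvent(state, event)
	}
	return state
}
```

Because the state is passed by value, the base state stays untouched and you can replay the same events as often as you like.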

You apply events to an aggregate. Let's look at that next.

Business rules

Okay, I understand how complicated this sounds compared to a simple CRUD application. But have you ever had to deal with changing business requirements? Perhaps your system should only allow admins to cancel orders.

Your list of business rules will only grow. I've never seen this list get smaller. So when you want to update an order (to cancel it for example), you'll have to be quite careful that your changes make sense and don't accidentally overwrite information you need for other purposes. Accidents happen, the wrong fields get updated, and all of a sudden your data is in a non-functional state.

This process is much easier and less risky with event sourcing.

You can create a new command, for example, CancelOrderCommand. When the aggregate handles this command, it validates all of your business rules, and if they pass, it creates an OrderCancelled event. Projectors can then process this event to update your read models.

Every specific action has its own set of business rules. This approach makes your code a lot less cluttered, because all of these rules are in one place: the aggregate. The events themselves are nothing more than a record of what happened.

Let me show you how easy these business rules can be by creating a minimal aggregate. I'll use Golang in my example, but you can use any language.

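    import (
        "context"
        "fmt"
    )

    // Minimal definitions assumed for this example.
    type Event interface{}
    type Command interface{}

    type CancelOrderCommand struct{ ID, AdminID string }
    type OrderCancelled struct{ ID, CancelledBy string }

    type OrderAggregate struct {
        Events      []Event
        ID          string
        isCancelled bool
    }

    // HandleCommand validates the business rules and records an event if they pass.
    func (o *OrderAggregate) HandleCommand(ctx context.Context, command Command) error {
        switch cmd := command.(type) {
        case *CancelOrderCommand:
            if o.isCancelled {
                return fmt.Errorf("order %s is already cancelled", o.ID)
            }
            // Pass a pointer so it matches the *OrderCancelled case in Apply.
            o.Apply(&OrderCancelled{
                ID:          cmd.ID,
                CancelledBy: cmd.AdminID,
            })
        }
        return nil
    }

    // Apply records the event and updates the in-memory state.
    func (o *OrderAggregate) Apply(event Event) {
        o.Events = append(o.Events, event)
        switch event.(type) {
        case *OrderCancelled:
            o.isCancelled = true
        }
    }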

The aggregate has two methods: HandleCommand and Apply. HandleCommand is where you validate the business rules by handling commands, in this case, the CancelOrderCommand. It checks if the order is already cancelled, and if not, it applies the OrderCancelled event to the aggregate. Apply is where you apply the event to the aggregate, updating its in-memory state. This in-memory state only contains the information you need to handle the command.

If, at some point, you find that your aggregate is getting too large, you can always refactor the code to fit your needs. The sole goal of the aggregate is to make a decision based on the command and apply the event to the aggregate.
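To show how a new requirement like "only admins can cancel orders" could slot in, here is a small sketch of the decision step on its own. It is an illustration under my own assumptions: the `IsAdmin` field, the `ErrNotAdmin` error, and the `DecideCancel` function are hypothetical names, not part of the aggregate above.

```go
package main

import (
	"errors"
	"fmt"
)

// ErrNotAdmin is returned when a non-admin tries to cancel an order.
var ErrNotAdmin = errors.New("only admins can cancel orders")

// CancelOrderCommand carries the request; IsAdmin is a hypothetical field.
type CancelOrderCommand struct {
	OrderID string
	ActorID string
	IsAdmin bool
}

// OrderCancelled is the fact recorded when all rules pass.
type OrderCancelled struct {
	OrderID     string
	CancelledBy string
}

// DecideCancel checks every rule for cancelling an order in one place.
// It returns the event to record, or an error if a rule is violated.
func DecideCancel(alreadyCancelled bool, cmd CancelOrderCommand) (OrderCancelled, error) {
	if !cmd.IsAdmin {
		return OrderCancelled{}, ErrNotAdmin
	}
	if alreadyCancelled {
		return OrderCancelled{}, fmt.Errorf("order %s is already cancelled", cmd.OrderID)
	}
	return OrderCancelled{OrderID: cmd.OrderID, CancelledBy: cmd.ActorID}, nil
}
```

Adding the rule is one extra `if` next to the existing one. Events that were recorded before the rule existed stay exactly as they are.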

Storing the events

After applying the event to the aggregate, you can store the resulting events in your event store. These events are now records of facts.

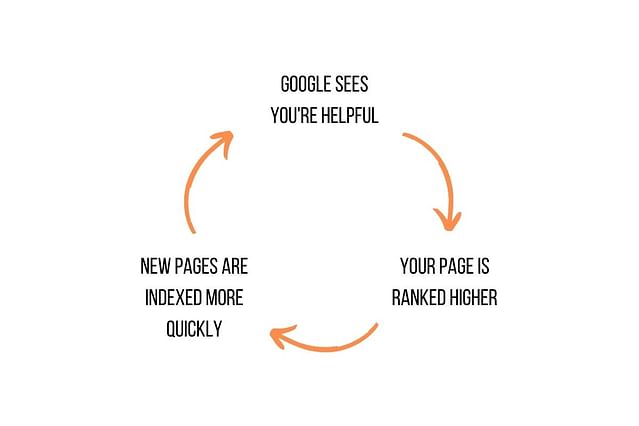

Why is this so powerful? Because the list of events doesn't have an opinion. It's just a record of what happened. If your business logic has changed at some point, it doesn't mean the cancelled orders are no longer cancelled. They were cancelled, because the business said they were at that time.

The events are immutable, they can't be changed. This means you can always trust the data you have because it will never change. If you want to undo an action, you'll have to create a new event that represents the business action, such as OrderReinstated.
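A minimal in-memory sketch of such a store, assuming nothing more than an append-only slice. `MemoryStore`, `StoredEvent`, and the stream ID format are names I made up for illustration; a real event store would persist to disk or a database.

```go
package main

import "sync"

// StoredEvent is an event plus the position the store assigned to it.
type StoredEvent struct {
	Position int    // global, ever-increasing position in the store
	StreamID string // e.g. "order-12345"
	Type     string // e.g. "OrderCancelled", "OrderReinstated"
}

// MemoryStore is an append-only, in-memory event store for illustration.
// It has no update or delete methods on purpose.
type MemoryStore struct {
	mu     sync.Mutex
	events []StoredEvent
}

// Append records a new fact at the end of the log and returns it.
func (s *MemoryStore) Append(streamID, eventType string) StoredEvent {
	s.mu.Lock()
	defer s.mu.Unlock()
	e := StoredEvent{Position: len(s.events) + 1, StreamID: streamID, Type: eventType}
	s.events = append(s.events, e)
	return e
}

// Load returns a copy of all events for one stream, oldest first.
func (s *MemoryStore) Load(streamID string) []StoredEvent {
	s.mu.Lock()
	defer s.mu.Unlock()
	var out []StoredEvent
	for _, e := range s.events {
		if e.StreamID == streamID {
			out = append(out, e)
		}
	}
	return out
}
```

Undoing a cancellation is then just another `Append` with an OrderReinstated event. The earlier OrderCancelled event stays in the log.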

By using these events in this way, you have the full context of the order, which you wouldn't have had in a CRUD application. In a CRUD application, you wouldn't know if this order was ever cancelled, unless you had the foresight to include an audit trail from the start or use fields like reinstated_at and reinstated_by. But even then, you'll always lose information and context when you update fields on the order.

This is why event sourcing is so powerful. It allows you to build systems that are flexible, maintainable, and resilient to change.

Read models, projectors, and live read models

In the previous section, I mentioned read models and projectors. There are 2 types of read models: projected read models and live read models.

A projected read model is almost identical to the data you'll find in a CRUD system: It can be a simple row in a database or data you fetch from any API. These projected read models are created using projectors or can be data from an external system.

Projectors listen to specific events and perform some action with this information. In this case, we can set is_cancelled to true and cancelled_by to the current employee ID, then store this information in a database.
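A projector like that could look roughly like this. It's a sketch under assumptions: a plain map stands in for the database table, and `OrderProjector`, `OrderRow`, and `Handle` are hypothetical names.

```go
package main

// OrderCancelled is the event the projector listens for.
type OrderCancelled struct {
	OrderID    string
	EmployeeID string
}

// OrderRow stands in for a database row in this example.
type OrderRow struct {
	IsCancelled bool
	CancelledBy string
}

// OrderProjector keeps the projected read model up to date.
type OrderProjector struct {
	Rows map[string]OrderRow // keyed by order ID; replaces a real table here
}

// NewOrderProjector returns a projector with an empty read model.
func NewOrderProjector() *OrderProjector {
	return &OrderProjector{Rows: make(map[string]OrderRow)}
}

// Handle reacts to the events it cares about and ignores the rest.
func (p *OrderProjector) Handle(event any) {
	switch e := event.(type) {
	case OrderCancelled:
		row := p.Rows[e.OrderID]
		row.IsCancelled = true
		row.CancelledBy = e.EmployeeID
		p.Rows[e.OrderID] = row
	}
}
```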

Live read models are a little more complex, but also very powerful. A live read model is an in-memory representation of your entity, in this case, your order. When you fetch your order, you really fetch the list of events for this order and apply each of them to the base state. Instead of being stored as a row in a database, the order is the result of all the events that have taken place. It's very similar to how the aggregate works.

Why read models and projectors are so powerful

Read models and projectors are among the greatest tools in event sourcing, in my opinion. Why? Well, you can very quickly add new features to your application, based on historical events. A great example of this is reporting.

By using events as your source of truth, you can create reports like "How many orders were cancelled in the last month?" or "How many orders were placed by a specific customer in December last year?". Or even more specific: "How many orders were cancelled and then reinstated by a specific customer this year?".

Can you do this with CRUD? Sure, but you'll have to write a lot of SQL queries and create indexes to make this work. With event sourcing, you can fetch the events you're interested in and apply them to a read model that represents the data you need. This is much more efficient and easier to maintain. Forgot a field in your read model? No problem, just add it and rebuild it.
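As a small illustration, a report like "How many orders were cancelled in the last month?" can be a loop over the events. The `Event` shape and the `CountCancelled` function are assumptions for this sketch.

```go
package main

import "time"

// Event is a minimal stored event for this report.
type Event struct {
	Type       string
	OrderID    string
	OccurredAt time.Time
}

// CountCancelled counts OrderCancelled events in the half-open
// window [from, to): from is included, to is excluded.
func CountCancelled(events []Event, from, to time.Time) int {
	count := 0
	for _, e := range events {
		if e.Type != "OrderCancelled" {
			continue
		}
		if !e.OccurredAt.Before(from) && e.OccurredAt.Before(to) {
			count++
		}
	}
	return count
}
```

For "the last month" you could pass `time.Now().AddDate(0, -1, 0)` as `from` and `time.Now()` as `to`. The report works on orders that were cancelled long before you thought of writing it.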

Seeing the data as it was at a specific point in time

One huge benefit of live read models is that you can see the data as it was at a specific point in time. This is something you can't do with CRUD, because CRUD always gives you the latest version of the data. The same goes for most API responses.

Most of the time, this is completely fine. However, when you're working on a bug that affected the system last week, you'll want to see all data as it was at that point in time. If colleagues or customers have changed parts of the data since then, this might have resolved the bug. This means you can't reproduce it, so you close the task, and you'll have to wait for the next time it happens.

Another great example is product pricing. If a customer contacted you last week about ordering a product, but the price has changed since then, you'll want to see the price as it was last week. This way, you can honor the price they saw last week, even if the price has changed since then.
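The pricing example can be sketched as a replay that stops at a given moment. `PriceChanged` and `PriceAt` are hypothetical names, and the sketch assumes the events are ordered oldest first.

```go
package main

import "time"

// PriceChanged records that a product's price changed at a moment in time.
type PriceChanged struct {
	ProductID  string
	PriceCents int
	OccurredAt time.Time
}

// PriceAt replays the events up to and including `at` and returns the
// price that was in effect at that moment. ok is false if no price had
// been set yet. It assumes events are ordered oldest first.
func PriceAt(events []PriceChanged, at time.Time) (priceCents int, ok bool) {
	for _, e := range events {
		if e.OccurredAt.After(at) {
			break
		}
		priceCents, ok = e.PriceCents, true
	}
	return priceCents, ok
}
```

Asking for the price "as of last week" is the same call with a different timestamp; nothing about the stored events changes.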

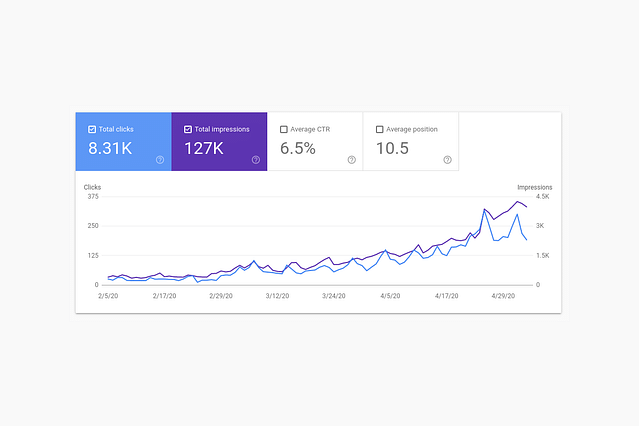

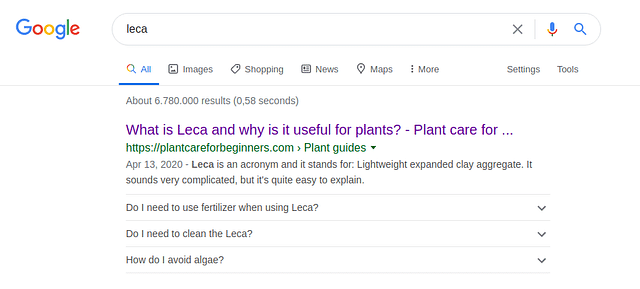

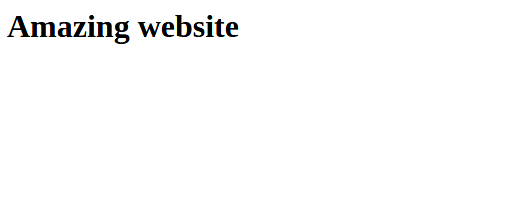

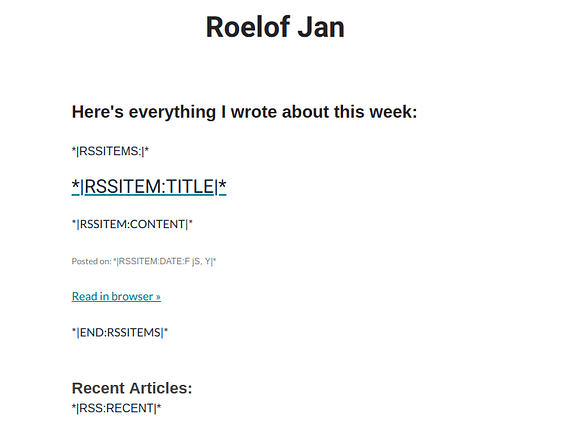

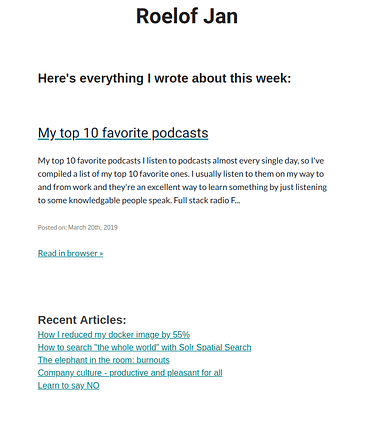

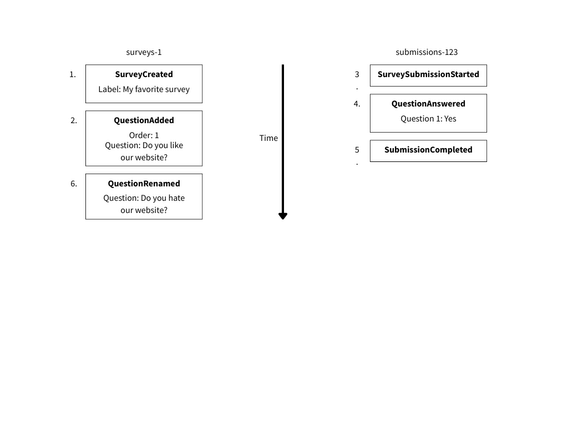

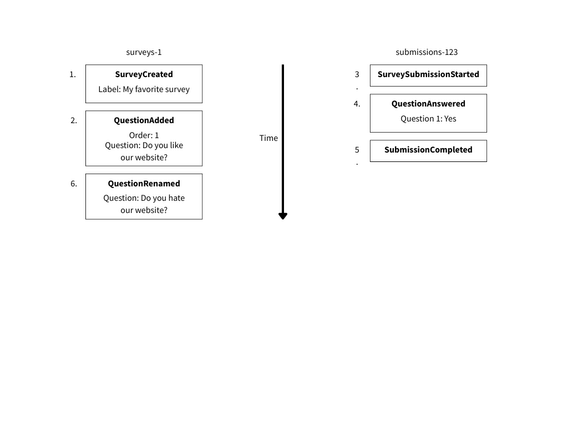

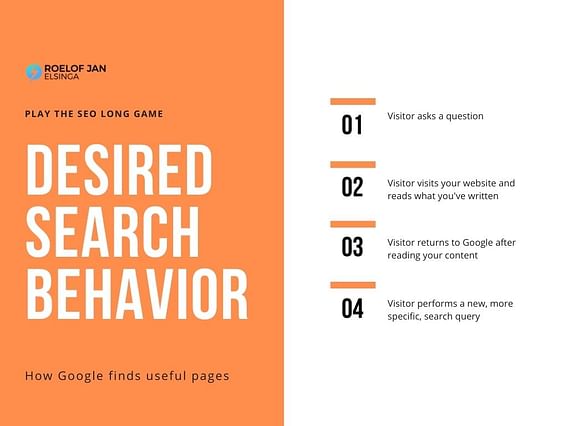

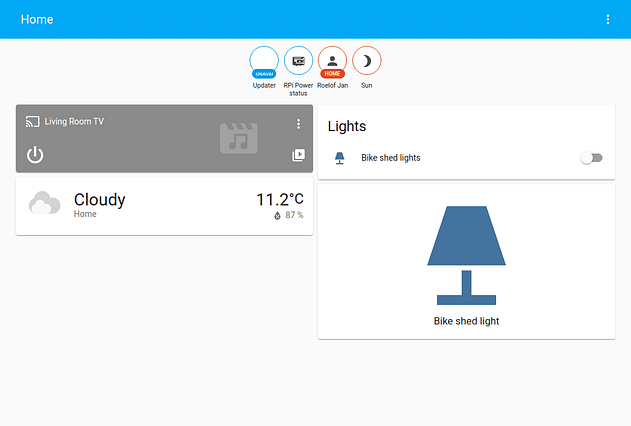

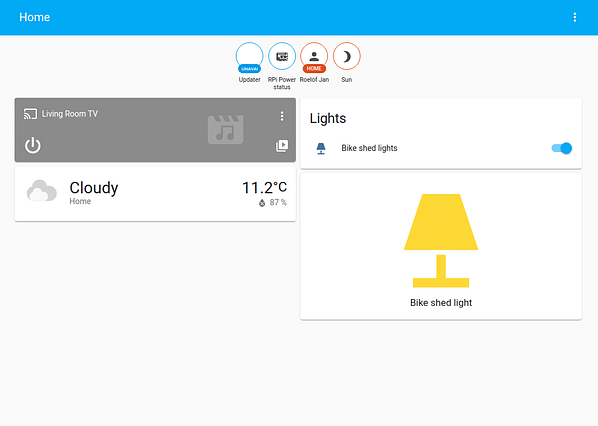

When you look at the image above, you'll see that the visitor answered the question "Do you like this website?" positively with "Yes". However, afterwards, the question was changed to "Do you hate our website?". In a CRUD application, you would only see the latest version of the question, which is "Do you hate our website?". In an event sourced application, you can see the question as it was at the time the visitor answered it, which was "Do you like this website?".

Won't this cause performance issues?

I already see you getting worried about performance. After all, if you have to apply all of these events to get the current state of your order, won't this cause performance issues? The answer is: not necessarily. Sure, if you need to apply millions of events for a single entity, you'll probably have to wait a few seconds.

Just like any application, you'll have to think about which stream (list of events) makes sense for your use case. Let me use Omoik as an example.

Real life example: Omoik

Omoik has surveys and surveys have submissions. You'll want to track statistics, like "How many people have seen my survey?", "How many people have submitted my survey?", etc.

In the beginning, I thought about the right setup and concluded that I would store these submissions in the same stream as the survey. A little foreshadowing: That was a bad idea.

This stream became very large very quickly, causing performance issues when fetching the survey.

I thought I needed information from the survey to process the submissions, so it's all part of the same stream, right? Wrong.

Instead, each survey gets its own stream, and each submission gets its own stream. This setup causes every stream to be a maximum of 20 events or so, which is processed within a millisecond. This way, I can fetch the survey and the submissions separately, without having to apply all of the events in the survey stream. Since the event store keeps track of the global event ID, I can fetch live read models for the survey up to the point at which the submission was created.

So no matter when I process the survey submissions, I always grab the most up-to-date survey information at that point in time. This way, I know what the website visitor saw when they submitted the survey, and I can use this information to process the submission correctly.
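Here's a simplified sketch of that idea, not Omoik's actual code. It assumes every stored event carries a global `Position`, and the event types and the `QuestionAsOf` function are invented for this example.

```go
package main

// Event is a stored event; Position is the store-wide, increasing event ID.
type Event struct {
	Position int
	StreamID string
	Type     string // "SurveyCreated" or "QuestionChanged" in this sketch
	Text     string // the question text
}

// QuestionAsOf replays one survey stream up to and including a global
// position and returns the question text a visitor saw at that point.
// It assumes the stream is ordered by position.
func QuestionAsOf(surveyStream []Event, position int) string {
	question := ""
	for _, e := range surveyStream {
		if e.Position > position {
			break
		}
		switch e.Type {
		case "SurveyCreated", "QuestionChanged":
			question = e.Text
		}
	}
	return question
}
```

When processing a submission, you'd pass the global position of the event that created the submission. The survey stream stays small, and the submission still sees the survey as it was at that moment.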

Code changes don't break the past

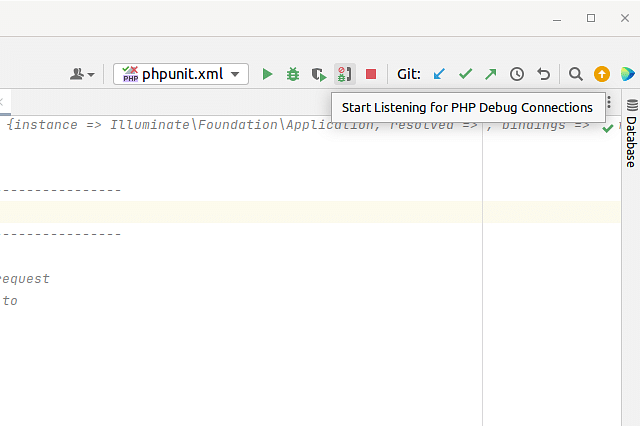

When you get started with event sourcing, you'll either use some type of library or framework to help you set it all up, or you'll roll something yourself. Either way, you'll have to write some code to handle the events and commands.

You won't get it right the first time. You'll write code that works, but then you'll realize that you need to change something. And none of that is a problem, because the events are immutable. You can quite literally rewrite the entire code base, but the events will still be there, unchanged.

I've rewritten the code for Omoik several times now, and every time I do, I learn something new. I find better ways to handle events, commands, and aggregates. I find better ways to structure my code and make it more maintainable. I'm never scared of breaking something, because I still have the events. Did the projectors stop working or create incorrect read models? No problem, I can fix the projector and replay the events to rebuild the read models.
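Rebuilding a read model can be as simple as starting from scratch and running every event through the fixed projector. This is a rough sketch with made-up names (`Rebuild`, `Projector`, `StatusProjector`); a map stands in for the read model's table.

```go
package main

// Event is a minimal stored event for this sketch.
type Event struct {
	Type    string
	OrderID string
}

// Projector turns one event into a change on a read model.
type Projector func(model map[string]string, e Event)

// Rebuild starts from an empty read model and replays every event
// through the given projector. The events themselves are never modified.
func Rebuild(events []Event, project Projector) map[string]string {
	model := make(map[string]string)
	for _, e := range events {
		project(model, e)
	}
	return model
}

// StatusProjector maps each order ID to its latest status.
func StatusProjector(model map[string]string, e Event) {
	switch e.Type {
	case "OrderPlaced", "OrderReinstated":
		model[e.OrderID] = "open"
	case "OrderCancelled":
		model[e.OrderID] = "cancelled"
	}
}
```

If `StatusProjector` had a bug, you'd fix the function and call `Rebuild` again with the same events.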

Once you start to understand that, you'll realize how much fun it is to break and rebuild your systems. You can experiment with new ideas, try out new libraries, and see how they work with your events.

Definitely not something you can do with CRUD without making a backup of your database first.

Conclusion

I can go on forever, but I think I've made my point. Event sourcing is a powerful technique that allows you to build flexible, maintainable, and resilient systems. It helps you keep track of the history of your data, making it easier to understand and debug your application.

If you're not using event sourcing yet, I highly recommend giving it a try. It might take some time to get used to, but once you do, you'll wonder how you ever lived without it.


CLI applications are a great way to pack a lot of functionality into a little package and perform tasks very effectively and predictably. The CLI application to index documents in Apache Solr didn't start out that way. The 2 applications I mentioned in the intro were designed to each take over a small part of this process. I used both applications as filters to reduce the amount of data going through PHP. After merging these applications, it turned out that 60% of the entire process was now in Go and the performance boost was significant.

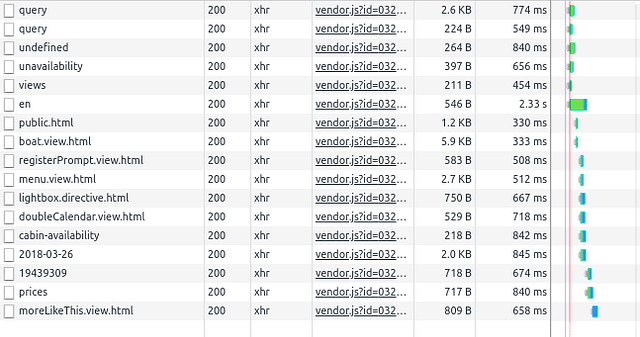

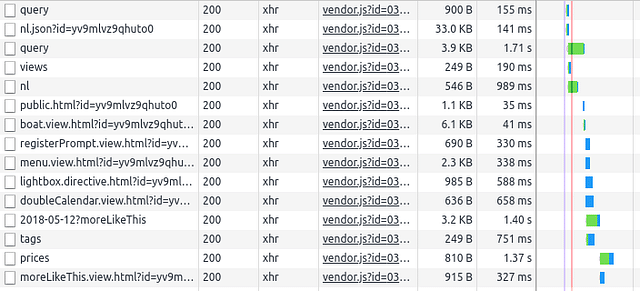

GraphQL is an amazing abstraction layer for your API endpoints. You can abstract many different systems without exposing these to the consumer of your API. Another benefit is that you can point your API consumers to a single, self-documenting, place to retrieve all of their data instead of making them fetch their data from 4 different places and combine it on their side. GraphQL serves as an API gateway.

This utility web server looks a lot like the two filter applications I developed in the first two weeks of using Go. It's processing larger amounts of data much more efficiently than PHP could and returns only that which PHP needs to perform its tasks. This web server improved the runtime of this particular process from 10-20 seconds to 3-4ms.